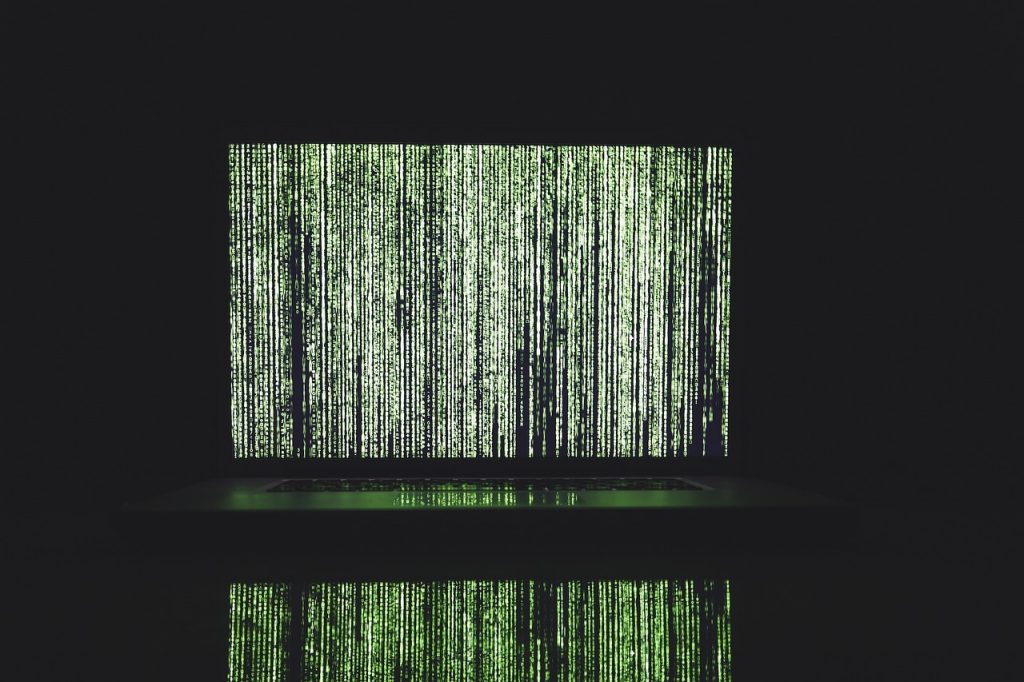

There was an excellent article by Andrew Smith in the Guardian newspaper last week. 'Franken-algorithms: the deadly consequences of unpredictable code' examines issues with "our new algorithmic reality" and the "growing conjecture that current programming methods are no longer fit for purpose given the size, complexity and interdependency of the algorithmic systems we increasingly rely on". "Between the “dumb” fixed algorithms and true AI lies the problematic halfway house we’ve already entered with scarcely a thought and almost no debate, much less agreement as to aims, ethics, safety, best practice," Smith says.

I was particularly interested in the changing understandings of what an algorithm is.

In the original understanding of an algorithm, says Andrew Smith, "an algorithm is a small, simple thing; a rule used to automate the treatment of a piece of data. If a happens, then do b; if not, then do c. This is the “if/then/else” logic of classical computing. If a user claims to be 18, allow them into the website; if not, print “Sorry, you must be 18 to enter”. At core, computer programs are bundles of such algorithms." However, "Recent years have seen a more portentous and ambiguous meaning emerge, with the word “algorithm” taken to mean any large, complex decision-making software system; any means of taking an array of input – of data – and assessing it quickly, according to a given set of criteria (or “rules”)."
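To make the classical sense concrete, here is a minimal Python sketch of the age check Smith uses as his example. The function name `age_gate`, the "Welcome" response, and the assumption that a claimed age of 18 or more is enough to enter are illustrative choices of mine, not details from the article.

```python
# A minimal sketch of the "classical" algorithm Smith describes:
# one if/then/else rule applied to one piece of data.
# Hypothetical names: age_gate, MINIMUM_AGE and the "Welcome" response
# are illustrative assumptions, not taken from the article.

MINIMUM_AGE = 18


def age_gate(claimed_age: int) -> str:
    """Return the site's response to a user's claimed age."""
    if claimed_age >= MINIMUM_AGE:
        # Condition a holds: do b (let the user in).
        return "Welcome"
    else:
        # Condition a fails: do c (show the refusal message).
        return "Sorry, you must be 18 to enter"


if __name__ == "__main__":
    for age in (17, 18, 30):
        print(age, age_gate(age))
```

Every outcome here can be traced back to a single condition that anyone can read and check.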

And this, of course, is a problem, especially where algorithms, even if published, are not in the least transparent and, with machine learning, are constantly evolving.